Co-Founding and Designing, From 0 to 1, an In-Home Monitoring System for Older Adults to Prevent Emergencies From Becoming Tragedies

Co-founder first, designer second.

This was part of a 10-week startup incubator with 2 devs and a PM. We pitched every week: new idea, new slides, hundreds of user interviews, and plenty of scrapped ideas. Super fun to be that scrappy and messy.

oasis — product overview

What if they fall? What if they choke? What if, what if, what if…

Younger family members spend a lot of time worrying about older loved ones who live on their own. To monitor them, families have to watch 24/7, causing constant stress and anxiety.

So we designed a vision-based in-home monitoring system that can detect falls and other emergency situations, to help older adults maintain their autonomy while providing peace of mind for their loved ones.

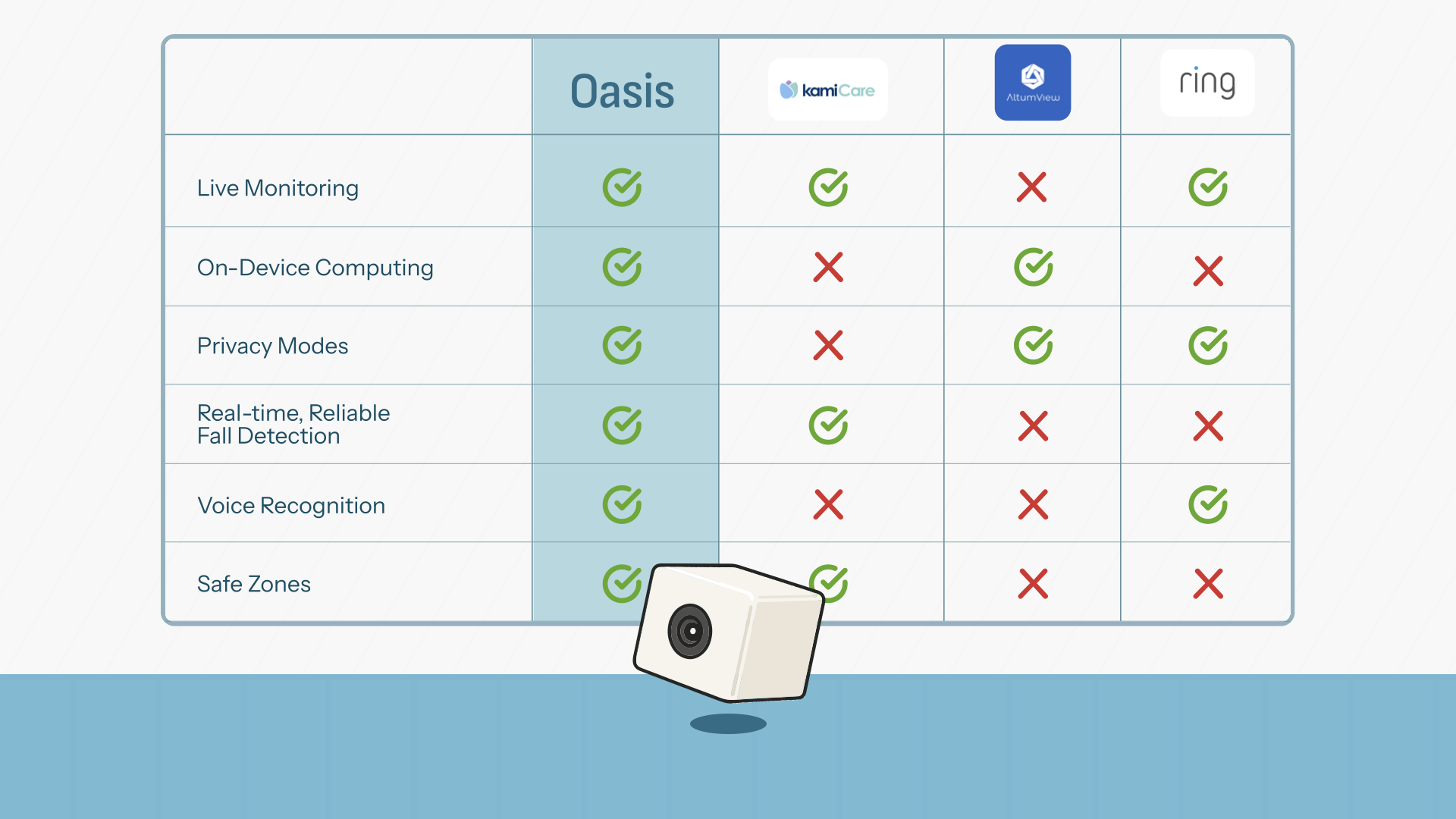

Oasis vs. existing solutions — on-device processing, privacy modes, fall detection, voice recognition, and safe zones.

Older adults want to maintain their autonomy and privacy, but that causes a lot of worries for families.

22+ user interviews, in English and Korean

Dancing With Grandpa

57 survey responses, ages 18–65

40+ secondary sources: articles, reports, databases

47 raw insights across 10 themes

Research insights

Interviews conducted at senior centers, home visits, and Korean community centers in LA

Autonomy is one of the top priorities for older adults aged 60 to 70. Any solution that visibly compromises independence will be rejected, regardless of how well it works.

Current solutions like wearables or caregivers cause friction. Most older adults forget to wear wearables or find them uncomfortable, and caregivers infringe on their independence.

Jasmita's grandma wants to continue to live alone, but her health is deteriorating. She was recently diagnosed with a neurodegenerative disease. They set up baby monitors but those need to be watched 24/7, which means Jasmita's parents are constantly worried. This is where Oasis steps in…

An in-home camera monitoring system that uses computer vision to detect falls, signs of unresponsiveness, and choking in real time.

Multiple cameras cover danger zones around the house, connecting to a central node that processes the visual data to determine if an incident happened.
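The camera-to-central-node flow described above can be pictured with a minimal Python sketch. It is illustrative only and is not the Oasis implementation: `CentralNode`, `Alert`, `process_frame`, and `stub_detector` are hypothetical names, the detector is a stand-in for whatever computer vision model runs on the node, and treating a missing frame as a camera issue is an assumption. The alert types and the pending/reviewed status mirror the alerts view shown later in this case study.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Optional


class AlertType(Enum):
    FALL = "fall"
    CAMERA_ISSUE = "camera_issue"
    CALL_FOR_HELP = "call_for_help"


class AlertStatus(Enum):
    PENDING = "pending"
    REVIEWED = "reviewed"


@dataclass
class Alert:
    room: str
    kind: AlertType
    status: AlertStatus = AlertStatus.PENDING  # new alerts wait for caregiver review


# Hypothetical detector: takes one frame, returns an alert type or None.
Detector = Callable[[bytes], Optional[AlertType]]


@dataclass
class CentralNode:
    """Hypothetical central node that every camera sends its frames to."""

    detector: Detector
    alerts: list[Alert] = field(default_factory=list)

    def process_frame(self, room: str, frame: Optional[bytes]) -> Optional[Alert]:
        # Assumption: a missing frame means the camera needs attention.
        if frame is None:
            kind: Optional[AlertType] = AlertType.CAMERA_ISSUE
        else:
            kind = self.detector(frame)
        if kind is None:
            return None  # nothing happened in this frame
        alert = Alert(room=room, kind=kind)
        self.alerts.append(alert)
        return alert


def stub_detector(frame: bytes) -> Optional[AlertType]:
    # Placeholder for a real vision model; flags one magic payload as a fall.
    return AlertType.FALL if frame == b"fall" else None


node = CentralNode(detector=stub_detector)
node.process_frame("kitchen", b"ok")
node.process_frame("bathroom", b"fall")
node.process_frame("bedroom", None)
print([(a.room, a.kind.value, a.status.value) for a in node.alerts])
```

Keeping the decision logic in a single node is what would let the cameras stay simple: in this sketch they only forward frames, and everything a caregiver sees is derived from the alerts list.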

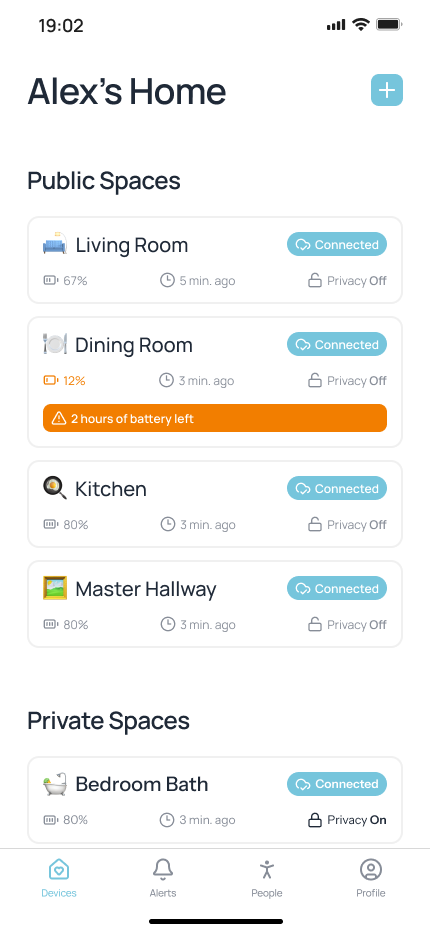

Home screen showing all connected cameras across rooms, with battery status and privacy controls.

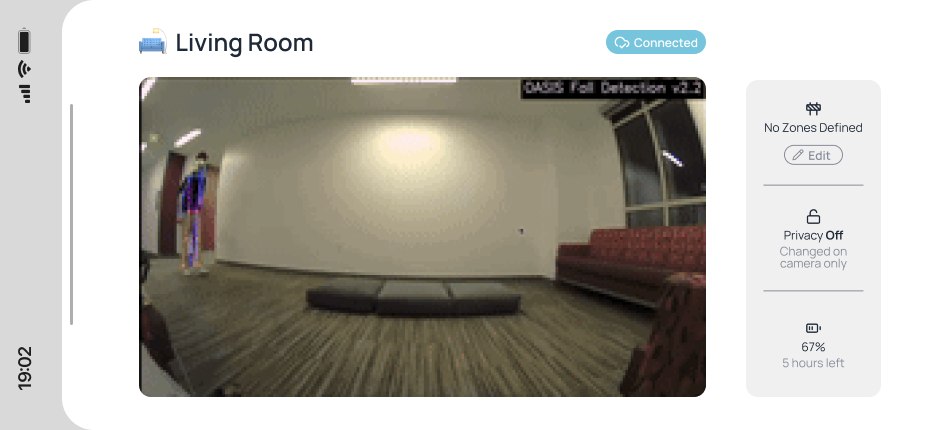

Live camera feed from a room. Caregivers can monitor in real time.

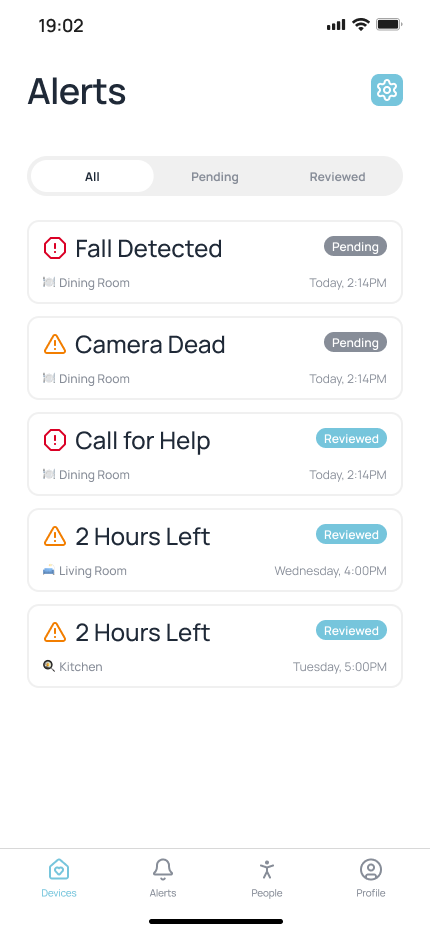

All alerts view: falls, camera issues, and call-for-help events with pending/reviewed status.

In-home camera monitors that detect falls already exist, but they're way too expensive.

With recent advancements in computer vision and on-device processing, algorithms are now much more accurate and can detect incidents in real time, on the device itself.

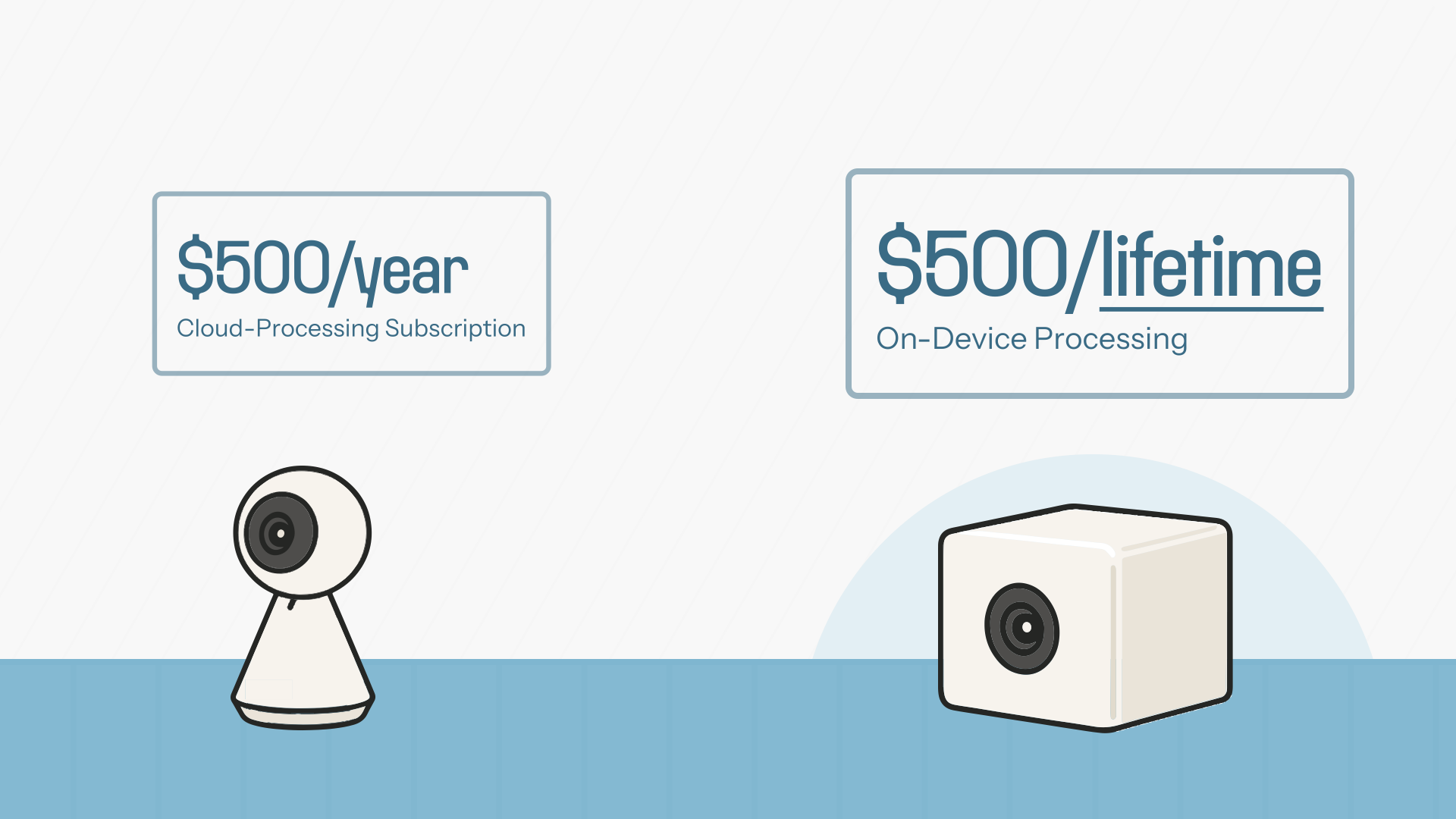

$500/year cloud-processing subscription vs. Oasis at a one-time $500 with on-device processing.

Shipped my first product!

I treated user interviews more like user conversations. My interviews yielded a lot more when I wasn't following set questions like "what are pain points in your life right now?" and just enjoyed learning more about another person. A lot of interesting insights I didn't expect came about because I wasn't looking just for "problems" in another person's life.

Designing for hardware is designing for a different medium. With software it's mainly screens, but with hardware there are different ways you can communicate and interface: through sound, light, and color. That means there are a lot more opportunities to make it accessible for older adults who may not be as familiar with digital screens.